3.4. Shared Memory With Memory-mapped Files

A memory mapping is a region of the process’s virtual memory space that is

mapped in a one-to-one correspondence with another entity. In this section, we will focus

exclusively on memory-mapped files, where the region of memory

corresponds to a traditional file on disk. For example, assume that the address 0xf77b5000 is

mapped to the first byte of a file. Then 0xf77b5001 maps to the second byte, 0xf77b5002 to

the third, and so on.

When we say that the file is mapped to a particular region in memory, we mean that the process sets

up a pointer to the beginning of that region. The process can then dereference that pointer for

direct access to the contents of the file. Specifically, there is no need to use standard file

access functions, such as read(), write(), or fseek(). Rather, the file can be accessed

as if it has already been read into memory as an array of bytes. Memory-mapped files have several

uses and advantages over traditional file access functions:

- Memory-mapped files allow multiple processes to share read-only access to a common file. As a straightforward example, the C standard library (libc.so) is mapped into all processes running C programs. As such, only one copy of the file needs to be loaded into physical memory, even if there are thousands of programs running.

- In some cases, memory-mapped files simplify the logic of a program by using memory-mapped I/O. Rather than using fseek() multiple times to jump to random file locations, the data can be accessed directly by using an index into an array.

- Memory-mapped files provide more efficient access for initial reads. When read() is used to access a file, the file contents are first copied from disk into the kernel’s buffer cache. Then, the data must be copied again into the process’s user-mode memory for access. Memory-mapped files bypass the buffer cache, and the data is copied directly into the user-mode portion of memory.

- If the region is set up to be writable, memory-mapped files provide extremely fast IPC data exchange. That is, when one process writes to the region, that data is immediately accessible by the other process without having to invoke a system call. Note that setting up the regions in both processes is an expensive operation in terms of execution time; however, once the region is set up, data is exchanged immediately. [1]

- In contrast to message-passing forms of IPC (such as pipes), memory-mapped files create persistent IPC. Once the data is written to the shared region, it can be repeatedly accessed by other processes. Moreover, the data will eventually be written back to the file on disk for long-term storage.
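The IPC use described in the last two items can be sketched as follows. This is a minimal illustration rather than a definitive implementation: the helper name share_message() and the path passed to it are hypothetical, and the example assumes the two processes are a parent and a child created with fork(), so the child inherits the mapping instead of setting up its own. The parent waits for the child to exit before reading, which stands in for real synchronization.

```c
#include <assert.h>
#include <fcntl.h>
#include <string.h>
#include <sys/mman.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* Hypothetical helper (not part of any library): a child process writes msg
   into a shared file mapping, and the parent copies it into out.
   Returns 0 on success, -1 on failure. */
int
share_message (const char *path, const char *msg, char *out, size_t out_len)
{
  size_t length = strlen (msg) + 1;
  if (length > out_len)
    return -1;

  /* Create the backing file and size it to hold the message */
  int fd = open (path, O_RDWR | O_CREAT | O_TRUNC, 0600);
  if (fd == -1)
    return -1;
  if (ftruncate (fd, (off_t) length) == -1)
    {
      close (fd);
      return -1;
    }

  /* Shared, writable mapping; the child inherits it across fork() */
  char *region = mmap (NULL, length, PROT_READ | PROT_WRITE, MAP_SHARED, fd, 0);
  if (region == MAP_FAILED)
    {
      close (fd);
      return -1;
    }

  int result = -1;
  pid_t child = fork ();
  if (child == 0)
    {
      /* Child: an ordinary memory write, with no system call involved */
      memcpy (region, msg, length);
      _exit (0);
    }
  if (child > 0)
    {
      /* Parent: wait for the child to exit, then read the same memory */
      int status = 0;
      if (waitpid (child, &status, 0) == child)
        {
          memcpy (out, region, length);
          result = 0;
        }
    }

  munmap (region, length);
  close (fd);
  return result;
}
```

A call such as share_message ("/tmp/ipc_demo.bin", "hello", buf, sizeof (buf)) copies the child's message into buf. The backing file is left in place afterward, which is the persistence that pipes do not offer.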

3.4.1. Memory-mapped Files

Three functions provide the basic functionality of memory-mapped files. The mmap() and

munmap() functions are used to set up or remove a mapping, respectively. Both functions take a

length parameter that specifies the size of the region. The mmap() function also includes

parameters for the types of actions that can be performed (prot), whether the region is private

or shared with other processes (flags), the file descriptor (fd), and the byte offset into

the file that corresponds with the start of the region (offset). The addr parameter for

mmap() is typically NULL, allowing the system to determine the address of the region. For

munmap(), the addr must be the start of the memory-mapped region (which is the value

returned by mmap()).
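As a rough sketch of how these parameters fit together, the hypothetical helper byte_at() below maps only the page of a file that contains a requested byte. It relies on one detail not stated above: the offset argument must be a multiple of the system page size, which the code obtains with sysconf(). The helper name and its error convention are illustrative assumptions.

```c
#include <assert.h>
#include <fcntl.h>
#include <sys/mman.h>
#include <sys/stat.h>
#include <unistd.h>

/* Hypothetical helper: return the byte at position pos of the file at path
   by mapping only the page that contains it. Returns -1 on error. */
int
byte_at (const char *path, off_t pos)
{
  int fd = open (path, O_RDONLY);
  if (fd == -1)
    return -1;

  struct stat info;
  if (fstat (fd, &info) == -1 || pos < 0 || pos >= info.st_size)
    {
      close (fd);
      return -1;
    }

  /* offset must be a multiple of the page size, so round pos down */
  long page = sysconf (_SC_PAGESIZE);
  off_t offset = pos - (pos % page);
  size_t length = (size_t) (pos - offset) + 1;

  /* addr is NULL: let the system choose where to place the region */
  unsigned char *region = mmap (NULL, length, PROT_READ, MAP_PRIVATE, fd,
                                offset);
  if (region == MAP_FAILED)
    {
      close (fd);
      return -1;
    }

  int value = region[pos - offset];

  /* munmap() takes the address returned by mmap() and the same length */
  munmap (region, length);
  close (fd);
  return value;
}
```

Because the region starts at a page boundary inside the file, the byte of interest sits at index pos - offset of the mapping rather than at index pos.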

C library functions – <sys/mman.h>

void *mmap (void *addr, size_t length, int prot, int flags, int fd, off_t offset);
    Map a file identified by fd into memory at address addr.

int munmap (void *addr, size_t length);
    Unmap a mapped region.

int msync (void *addr, size_t length, int flags);
    Synchronize mapped region with its underlying file.

One key issue with memory-mapped files is the timing of when updates get copied back into the file

on disk. For instance, if another process opens and reads the file using read(), will this other

process have access to any updates that were written to the memory-mapped region? The answer is that

it depends on a number of timing factors.

The first factor is the kernel itself. When a file is mapped into memory with mmap(), the kernel

will occasionally trigger a write to copy updated portions back to disk. This write can occur for a

number of reasons and cannot be predicted. A second factor is the file system of the underlying

file. Some file systems do not commit changes to the file until the writing process has closed its

connection to the file. If the writing process still has the file mapped into memory, its connection

must still be open; as a result, no other process would be able to access any updates written to the

memory-mapped file.

Processes can exert control over this issue by using the msync() function. This function takes

a flags parameter that can initiate a synchronous, blocking write (MS_SYNC) or an

asynchronous, non-blocking one (MS_ASYNC). In the case of the asynchronous write, the data will

get copied to disk at a later point; however, the updated data would be immediately available to any

process that reads from the file with read().
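The following sketch shows one way msync() might be used. The helper write_then_read() is hypothetical: it changes the first byte of an existing, non-empty file through a shared mapping, requests a blocking write with MS_SYNC, and then reads the byte back through a second descriptor with read().

```c
#include <assert.h>
#include <fcntl.h>
#include <sys/mman.h>
#include <unistd.h>

/* Hypothetical helper: store value in the first byte of an existing,
   non-empty file through a shared mapping, synchronize it with msync(),
   and return the byte as seen by read(). Returns -1 on error. */
int
write_then_read (const char *path, char value)
{
  int fd = open (path, O_RDWR);
  if (fd == -1)
    return -1;

  char *region = mmap (NULL, 1, PROT_READ | PROT_WRITE, MAP_SHARED, fd, 0);
  if (region == MAP_FAILED)
    {
      close (fd);
      return -1;
    }

  /* Update the file's contents with an ordinary memory write */
  region[0] = value;

  /* MS_SYNC blocks until the update has been written back to the file */
  int result = -1;
  if (msync (region, 1, MS_SYNC) == 0)
    {
      /* A second descriptor, using read(), should now see the update */
      int reader = open (path, O_RDONLY);
      if (reader != -1)
        {
          unsigned char seen = 0;
          if (read (reader, &seen, 1) == 1)
            result = seen;
          close (reader);
        }
    }

  munmap (region, 1);
  close (fd);
  return result;
}
```

Replacing MS_SYNC with MS_ASYNC would let the call return without waiting for the write to disk to complete.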

3.4.2. Region Protections and Privacy

When setting up a memory-mapped file, the process must specify the protections that will be

associated with the region (prot). Note that these protections only apply to the current

process. If another process maps the same file into its virtual memory space, that second process

may set different protections. As such, it is possible that a region marked as read-only in one

process may actually change while the process is running. Table 3.2 identifies the protections

that can be combined as a bit-mask.

| Protection | Actions permitted |

|---|---|

| PROT_NONE | The region may not be accessed |

| PROT_READ | The contents of the region can be read |

| PROT_WRITE | The contents of the region can be modified |

| PROT_EXEC | The contents of the region can be executed |
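To illustrate combining protections as a bit-mask, the sketch below uses a hypothetical helper, try_mapping(), that attempts a shared mapping with a given prot value. It also shows a constraint the table does not mention: the requested protections must be compatible with how the file was opened. A shared mapping that asks for PROT_WRITE on a descriptor opened with O_RDONLY is rejected with EACCES.

```c
#include <assert.h>
#include <errno.h>
#include <fcntl.h>
#include <sys/mman.h>
#include <unistd.h>

/* Hypothetical helper: try to create a one-byte shared mapping of path with
   the given protections, using a descriptor opened with open_flags.
   Returns 0 if mmap() succeeds, the errno value set by mmap() if it fails,
   or -1 if the file cannot be opened. */
int
try_mapping (const char *path, int open_flags, int prot)
{
  int fd = open (path, open_flags);
  if (fd == -1)
    return -1;

  int result = 0;
  void *region = mmap (NULL, 1, prot, MAP_SHARED, fd, 0);
  if (region == MAP_FAILED)
    result = errno;
  else
    munmap (region, 1);

  close (fd);
  return result;
}
```

For an existing, non-empty file, try_mapping (path, O_RDWR, PROT_READ | PROT_WRITE) is expected to succeed, whereas try_mapping (path, O_RDONLY, PROT_READ | PROT_WRITE) is expected to return EACCES.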

Memory-mapped regions can also be designated as private (MAP_PRIVATE) or shared

(MAP_SHARED). When calling mmap(), exactly one of these two options must be specified for the

flags parameter. If a region is designated as private, any updates will not be visible to other

processes that have mapped the same file, and the updates will not be written back to the underlying

file.
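The difference between the two flags can be observed with a small experiment. The helper below, poke_and_reread(), is a hypothetical sketch: it writes one byte through a mapping created with the given flag and then checks the file's first byte with read(). It assumes the file already exists and is not empty.

```c
#include <assert.h>
#include <fcntl.h>
#include <sys/mman.h>
#include <unistd.h>

/* Hypothetical helper: write value to the first byte of the file through a
   mapping created with map_flag (MAP_PRIVATE or MAP_SHARED), then return the
   first byte of the file as seen by read(). Returns -1 on error. */
int
poke_and_reread (const char *path, int map_flag, char value)
{
  int fd = open (path, O_RDWR);
  if (fd == -1)
    return -1;

  char *region = mmap (NULL, 1, PROT_READ | PROT_WRITE, map_flag, fd, 0);
  if (region == MAP_FAILED)
    {
      close (fd);
      return -1;
    }

  /* Modify the region; a private mapping changes only this process's copy */
  region[0] = value;

  /* Even an explicit msync() does not push private changes to the file */
  msync (region, 1, MS_SYNC);
  munmap (region, 1);

  /* Check what the underlying file now contains */
  unsigned char seen = 0;
  int result = -1;
  if (read (fd, &seen, 1) == 1)
    result = seen;

  close (fd);
  return result;
}
```

If the file starts with the byte 'a', a call with MAP_PRIVATE and 'b' should return 'a' because the file is unchanged, while a call with MAP_SHARED and 'c' should return 'c'.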

Code Listing 3.6 shows how to map and unmap a file into memory. In this example, we are opening the

/bin/bash executable file on Linux. Linux executables are formatted using the executable

and linking format (ELF) specification. Part of this specification indicates that the first byte of

the file must be 0x7f and the next three bytes are the ASCII characters for ELF. This

program snippet confirms that bash on Linux is a valid ELF file. (It should be.)

```c
/* Code Listing 3.6:
   Read the first bytes of the bash executable to confirm it is ELF format
 */

#include <assert.h>
#include <fcntl.h>
#include <sys/mman.h>
#include <sys/stat.h>
#include <unistd.h>

int
main (void)
{
  /* Open the bash ELF executable file on Linux */
  int fd = open ("/bin/bash", O_RDONLY);
  assert (fd != -1);

  /* Get information about the file, including size */
  struct stat file_info;
  assert (fstat (fd, &file_info) != -1);

  /* Create a private, read-only memory mapping */
  char *mmap_addr = mmap (NULL, file_info.st_size, PROT_READ, MAP_PRIVATE, fd, 0);
  assert (mmap_addr != MAP_FAILED);

  /* ELF specification:
     Byte 0 of the file must be 0x7f;
     bytes 1 - 3 must be 'E', 'L', 'F' */
  assert (mmap_addr[0] == 0x7f);
  assert (mmap_addr[1] == 'E');
  assert (mmap_addr[2] == 'L');
  assert (mmap_addr[3] == 'F');

  /* Unmap the file and close it */
  munmap (mmap_addr, file_info.st_size);
  close (fd);
  return 0;
}
```

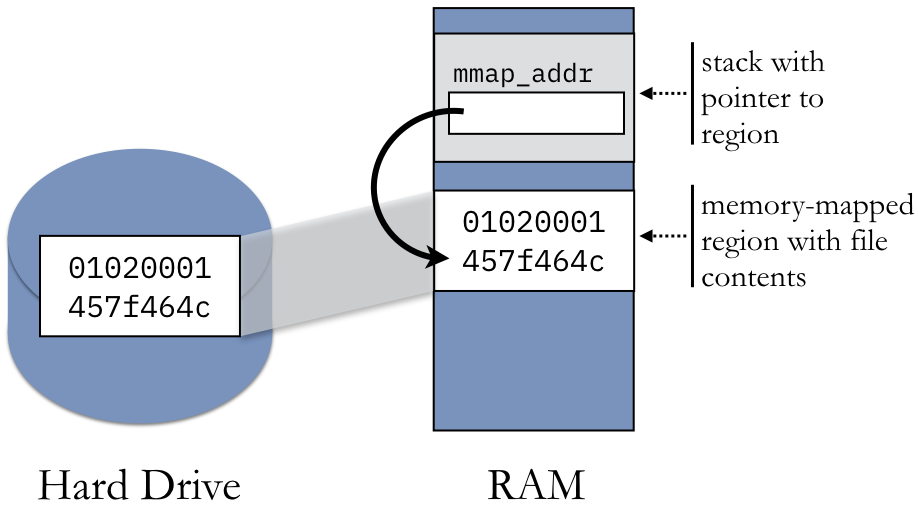

Figure 3.4.2 illustrates the structure of the memory-mapped file in Code Listing

3.6. The file’s original contents are stored on the hard drive. When the file is mapped into memory,

the process has a region of memory that corresponds to the exact structure of the file, creating the

appearance that the file’s contents have been copied into memory. The call to mmap() returns a

pointer to this region, which can be accessed as an array of bytes.

[1] Bypassing the kernel’s buffer cache can also be a disadvantage if other processes access
the file using the traditional read() function. Specifically, both processes (one with the
memory map and one without) will cause the data to be copied from disk into memory. Making the
second transfer from disk into memory is significantly slower than making a duplicate copy from the
buffer cache (which is in memory). Consequently, both processes would take a performance hit for
loading the file from disk, even though the process using the memory-mapped file would experience
slightly less impact.