Project 3 - OpenMP Application

Introduction

The primary purpose of this assignment is to gain experience with multicore parallelism using the OpenMP library. The secondary purpose is to gain experience parallelizing dense matrix operations.

Description

You will develop an optimized, parallel version of a provided program. The project distribution is available on the cluster at /shared/cs470/p3-openmp.tar.gz.

The program generates a random system of linear equations in the form of a coefficient matrix (A) and a right-hand-side column vector (b). The size of the system (n) is given as a command-line parameter to the program; note that the actual problem size scales quadratically with the input size because matrix A is of size (n x n). It then solves the system using Gaussian elimination and backwards substitution. The system is generated in such a way the solution (x) will be a column vector with all values equal to 1.0, making it easy to check the answers. This assignment is similar to programming assignments 5.4 and 5.5 in your textbook, and it is the same basic approach that is used in the industry standard Linpack benchmark for high-performance computers.

The serial code contains several functions as well as a few preprocessor directives to toggle program features on/off. For this assignment, you must efficiently parallelize (using OpenMP) all functions that can benefit from parallelization. In particular, you should focus on rand_system, gaussian_elimination, and the two back_substitute functions. Do not attempt to parallelize read_system because it reads from standard input.

RESTRICTIONS: You should not use Pthreads, semaphores, or any other concurrency/parallelization system. You may perform minor re-writes within functions in order to be able to more effectively parallelize them, but you should avoid any rewrites that cut across multiple functions or that fundamentally change the nature of an individual function. Both the serially-compiled and 1-thread OpenMP versions should produce the exact same numeric results as the serial version. For the higher thread counts, it is ok for the randomly-generated matrices to be different from the serial version, but the computed solutions should still be accurate (I suggest seeding the RNG in each thread using the thread ID). Finally, the behavior of the functions mentioned above should be independent of how they are called in main(); i.e., you should not have OpenMP parallel regions surrounding their call sites.

In your code documentation, you should include a discussion of your changes and why you made them. This assignment is more open-ended than the first two projects, but the amount of code you actually write should be rather small in the end. Thus, you must include documentation explaining your experiments and the reasoning behind your final submission. You should include an analysis of the memory access patterns in the original program and any loop-carried dependencies that inhibit parallelism. You should include performance results, a discussion of scaling trends and their relationship to the algorithm, and an argument as to why your version is the optimal way to parallelize the original code. IMPORTANT: "I tried it and it didn't run faster" is NOT a sufficient explanation! If you were unable to parallelize a particular function, explain what you tried and discuss reasons why it may not have worked well. If possible, suggest ways to improve the code to increase parallelism.

You may include your analysis as comments at the top of your submitted file, but I strongly encourage you to write your analysis using LaTeX or some other document preparation system, and then upload it somewhere and just provide the URL in your submission. This allows you to more easily include tables and graphs.

Use the "-d" command-line switch to toggle debug output. This

output will likely be unhelpful for matrices larger than 10x10. Use the

"-t" command-line switch to generate the coefficient matrix already

in upper-triangular form, removing the need to do Gaussian elimination. Enable

the USE_COLUMN_BACKSUB definition to enable the alternative back

substitution method (column-oriented vs. the standard row-oriented). Finally,

change the REAL macro if you wish to experiment with using single

precision instead of double precision.

For your final submission, debug mode must be disabled by default (e.g., no

debug output unless "-t" is used), and REAL must be

set to "double". You may leave either version of the

back-substitution function enabled, and you should justify your choice in your

analysis.

You should use compiler directives to disable all OpenMP code if the

compiler does not support it (HINT: check for "_OPENMP"). I have

provided a Makefile that builds three different programs: 1) the original serial

version, 2) the new parallel version, and 3) the new parallel version with

OpenMP disabled. The parallel version should use omp_get_wtime()

instead of gettimeofday() for performance timing. I have provided

timing macros that should work for this; you should not modify the timing code.

Your program should respect the OMP_NUM_THREADS environment

variable, and there is no need to accept the number of threads as a command-line

parameter. However, you do need to change the output code to print the correct

number of threads at the end.

You should use the cluster to do development and testing. However, please be considerate and do not run your tests on the login node directly. I suggest writing a test script that runs your program using various thread counts, then converting this script into a Slurm job script so that it can run on the compute nodes.

If you would like to test with some custom (non-randomized) matrices, you

can pass a filename to the program instead of a system size. I have supplied a

function (read_system) that handles the file I/O. The files should

contain a line at the top with a single integer n (the size of the system).

Subsequent lines should have rows of the augmented matrix [A][b]. Keep in mind

that the calculated "error" is irrelevant for non-randomized matrices because

the system was not generated in a way that guarantees the answers will be all

1's.

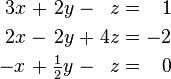

I have provided a single test file (matrix.txt) that contains the following system (taken from the Wikipedia article):

3 3.0 2.0 -1.0 1.0 2.0 -2.0 4.0 -2.0 -1.0 0.5 -1.0 0.0

The solution to this system is [ 1.0 -2.0 -2.0 ].

HINT: Understanding the data access patterns of the original code is crucial for correctly parallelizing it. Make sure you understand how these algorithms work before you attempt to begin writing new code.

HINT: Test with larger matrices! You won't begin to see true performance trends until you start testing on matrices larger than 500x500. I suggest testing with even larger matrices (at least 2000x2000 or 5000x5000 or even larger). Remember that you can use the "-t" option to disable gaussian elimination in order to test the other functions with larger matrices in a reasonable amount of time.

Grading Criteria

Your submission will be graded on a combination of correctness, functionality, elegance, style, and documentation. Here is a tentative grading rubric:

| Grade | Description | Requirements |

|---|---|---|

| A | Exceptional |

|

| B | Good |

|

| C | Satisfactory |

|

| D | Deficient |

|

| F | Unacceptable |

Submission

You should submit ONLY your modified par_gauss.c on Canvas by

the due date. This file must compile and run on the cluster with the original

makefile and timer.h, so I advise you not to change them.

All submitted code should be elegant, clean, consistent, and well-documented. The code I have provided uses 4-space indentation and the 1TBS style. If you choose to reformat the file to match your own style preferences, make sure you apply the changes throughout.

Peer Reviews

One of the goals of this course is to encourage good software development practices, especially when building a parallel or distributed software system. For this project, you will be assigned two other random students in the course. You must review their code and offer constructive feedback according to the given rubric.

After the submission deadline, I will assign you two other submissions to review on Canvas. I expect you to review two distinct submissions that are not your own; I will do my best to ensure that the assignments work out but sometimes Canvas does not cooperate. It is your responsibility to inform me immediately if you have been assigned yourself, your partner, or two other same-team partners to review.

To complete the peer review, submit a Canvas comment on the submission with your assessment of the submission according to the provided code review guidelines. I expect at least two paragraphs per review with detailed observations and an assigned overall numeric score. You will be graded on the quality, thoroughness, and professional tone of your review. The peer reviews are due one week after the original project due date.